I’ll freely admit that I don’t need to use a lot of maths1 in my day job: pretty much the only things I really need to understand are basic statistics and, occasionally, how to run a t-test to see whether an optimisation has actually improved things (though I guess I don’t even need to understand the maths for that one).

But sometimes maths ends up happening anyway, and it can be interesting when that happens. Here’s one such story:

Let’s say that we have some frontend service that we’d like to understand the performance of in a production configuration. Maybe we want to understand its RAM usage under load, or the latency for some given types of requests, that kind of thing.

In our example system, we’ll send requests to a single frontend task, but there’s a wrinkle: that frontend uses a sharded or replicated backend RPC service to service these requests, and the frontend will only connect2 to a given backend task when needed. That connection setup will take a little extra time whenever it occurs, which we don’t want to count in our test.

If we keep sending requests, then eventually the frontend task should have connected to all the backend tasks, and we’ll have a steady-state we can start analysing.

The question then is, how long will it take for our system to set up all of these connections and reach that steady-state? Or, more precisely, how many warm-up requests do we need to send before we can start our real measurements?

Let’s say that our frontend has a hundred backend tasks that it can connect to, and that we’ll model the selection of each backend task as uniformly random for each request. Do we need to send a few hundred requests to our frontend before all of those backends are connected, or a few thousand? Or more?

Incidentally, when I did something like this for real, I used a pragmatic solution instead: keep sending requests until the metric I was monitoring looked like it had settled down, then start measuring. But it did make me wonder how we might go about calculating this mathematically.

Back-of-the-envelope maths time: we know that it has to be at least 100 requests, since we can only make at most one connection to a backend task for any given frontend request. We also know that doing it in exactly 100 requests should both be possible and extremely unlikely:

- The first request we send will definitely trigger a connection to some backend task, since we haven’t made any connections at all yet.

- The second request will probably make another connection. Since we have 100 backend tasks, the chance is only $1 / 100$ that we pick the same backend as we used for the first request, so in $99 / 100$ cases we’ll connect to a new backend task.

- The third request will connect to a third backend in $98 / 100$ cases, and so on.

After we’ve sent that 100th request, the chance that we’ve connected to all 100 backends is therefore:

$$ \eqalign{ &\frac{100}{100} \times \frac{99}{100} \times \frac{98}{100} \times \dots \times \frac{2}{100} \times \frac{1}{100}\cr = &\frac{100!}{100^{100}} } $$

… which is a very small number: about 1 in $10^{42}$.

So if we want to have a high chance of having connected to all of the backend tasks, we’ll definitely need more than 100 requests, but it’s still not very clear how many: in theory, it could take an unbounded number of requests, since we might never pick all 100!

It turns out that this problem has a name: it’s the coupon collector’s problem, based on the idea of collecting all of $n$ different coupons by drawing randomly, one-by-one.

Calculating the expected number of requests we need is fairly straightforward: we can just sum the expected number of requests we need to make each new connection:

- For the first connection, we always need exactly one request.

- For the second connection, the probability of making a new request is $99 / 100$, so the expected number of requests we need is $100 / 99$.

- For the third connection, the expected number of requests is $100 / 98$, and so on.

To make the last connection, we expect that it’ll take $100 / 1$ or 100 requests, which makes sense: we have to pick the one unconnected backend from 100 options (in the same way that on average you’d expect to need to make six die rolls on a regular six-sided die in order to roll some pre-chosen number).

So, as Wikipedia tells us, if we calculate3

$$ \sum_{k=1}^{n} \frac{n}{k} $$

for n=100, we get about 518.74, which is the expected number of requests we’d need to send.

But what does this number actually tell us? If we send 519 requests, does that mean that we’ll probably have connected to all of the backends? Or that we have exactly a 50% chance of having done so? How many requests do we need if we want to have a 95% chance of having connected to all of the backends, say?

What we’ve just calculated is the expected value, and is an average of the number of requests.

In other words, if we were to run this experiment a large number of times, counting the number of requests until we successfully connected to all the backends ($C_1, C_2, C_3, \dots$), then the expected value is simply the average of those counts.

Since some of these counts might be quite large, this expected value isn’t actually that useful for thinking about the number of requests we’re going to need to send. What we really want to know is what the actual distribution looks like.

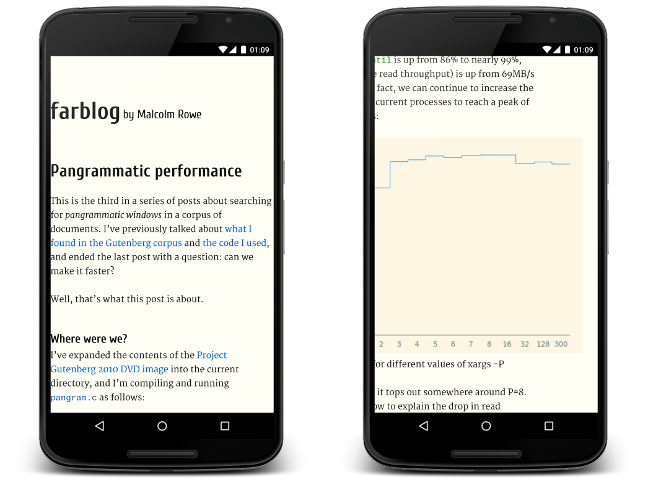

Since randomly picking those last few backends takes quite a few requests, the distribution is heavily skewed to the right. To cut to the chase, it looks like this:

For every point $x$ on the x-axis, the height of the curve shows the probability of collecting 100 coupons — or connecting to all 100 backends, in our example — in exactly $x$ attempts, so for $x = 100$ we have the very small (but non-zero) probability we calculated earlier, while for $x \lt 100$ (the hatched area) the probability is zero, as those values are impossible.

The expected value (518.74) we calculated above is marked, as are the median (497) and 95th percentile (754). All of these are larger than the mode (460), which is the most-likely number of attempts we’d need.

This graph also has non-zero values everywhere for $x \geq 100$, though it drops off quickly: the probability that we’d take more than 1000 attempts is only 0.4% or so, for example.

Wikipedia goes into the details of how to calculate this distribution exactly, though I don’t feel competent enough to explain it here. One useful nugget, though, is that we can estimate some of the statistics if we map this distribution to what is apparently called a Gumbel distribution:

- The mode for any given $n$ coupons is approximately $n \ln{n}$.

- For any percentile $p$, we can compute $k_p \approx n \ln{n} - n \ln{\left(-\ln{p}\right)}$.

For example, for $n=100$ and $p=0.95$, this gives a mode of 460, which is correct, and a 95th percentile of 758, which is pretty close to the real value of 754.

In this example, we could be very sure (~99.57%) that if we were to send about a thousand requests, we’ll have connected to all of our backends.

So, the next time you need to work out how many requests it takes to warm up a cache or fill a connection pool, you could use this to work out some magic numbers. But there’s an important caveat for this specific example: all of the above relies on our initial assumption that picking a backend is uniformly random.

In our non-spherical-cow world, load balancers don’t pick backends at random, and instead use a round-robin or similar strategy that would most likely end up actively picking the unconnected backends, significantly reducing the number of requests we actually need to connect to everything.

Maths gives us an interesting worst-case upper bound, but it also suggests why the pragmatic solution was a better idea: sometimes it’s just easier to watch your latency metrics until they flatten out, and let the maths happen in the background.

-

or “math”, for y’all in en-US. ↩

-

Perhaps you’re wondering whether our hypothetical frontend could just pre-connect to all possible backend tasks upon startup? Sure, it could, but let’s pretend that there are good reasons that it doesn’t. In any case, the same principles here can also apply to other areas like cache warming, for example, so let’s just go with the maths for now. ↩

-

We can actually compute a closed-form approximation of the expected value by way of harmonic numbers. It turns out that the exact value we want is $n H_n$, where $H_n$ is the nth harmonic number. We can approximate $H_n$ as $\ln{n} + \gamma + \frac{1}{2n}$, where $\gamma$ is Euler’s constant, approximately $0.577$, and so multiplying that approximation of $H_n$ by $n$ produces a reasonable approximation of the expected value for the coupon collector’s problem. ↩